Skills are often described as "plugins for agents," but the interesting part is in the operational model: discovery, metadata loading, matching, activation, and optional script execution. This article offers a practical view of that lifecycle using the Agent Skills specification, along with a small case study you can replicate. The goal is to make skills feel concrete: what goes inside a SKILL.md, how to maintain them in an orderly way, and how to prevent your skill library from becoming another unversioned prompt dump.

A skill is a markdown file in a folder. That's it.

No binary plugin, no running server, no wire protocol. A directory with a SKILL.md containing YAML frontmatter and instructions. If you can write a README, you can write a skill. The format is so deliberately simple that it tends to disappoint people who expect more ceremony from something called an "extension layer."

But the simplicity is the point. The value of skills isn't in the file format; it's in the discovery-routing-execution pipeline that agents build around these files. Understanding that pipeline is what separates someone who downloads skills from marketplaces and hopes they work from someone who can write, debug, and maintain them effectively.

Note on examples: This article uses Claude Code for concrete examples (file paths, frontmatter fields, invocation). Agent Skills are an open standard supported by 30+ tools. The same skill works across all of them. Only the installation path changes:

The SKILL.md format and frontmatter are the same everywhere. Check your tool's docs for the exact path.

The Agent Skills format is an open specification, originally developed by Anthropic and now adopted by over 30 tools: Claude Code, Cursor, VS Code (Copilot), GitHub Copilot, Gemini CLI, OpenAI Codex, Goose, Kiro, Roo Code, JetBrains Junie, Spring AI, Databricks, and others. The broad adoption matters because it means skills are portable, a skill written for one agent works across the ecosystem.

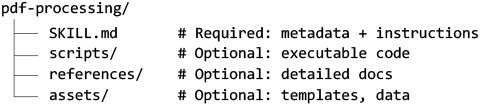

At the specification level, a skill is a directory:

The SKILL.md has two parts. The YAML frontmatter declares metadata:

The specification requires two fields: name (up to 64 lowercase characters, hyphens allowed) and description (up to 1024 characters). That's the mandatory surface. Everything else, license, compatibility, metadata, allowed-tools, is optional.

Below the frontmatter, the markdown body contains the actual instructions. No restrictions on format, length, or structure. Step-by-step workflows, examples, edge cases, whatever helps the agent perform the task. The recommendation is to keep it under 500 lines and move detailed reference material to separate files.

Individual tools extend the base specification with their own fields. Claude Code, for instance, adds disable-model-invocation, user-invocable, context, agent, model, effort, and hooks. These control whether the user or Claude can invoke the skill, whether it runs in a forked subagent, what model to use, and what lifecycle hooks apply. Other tools add their own extensions while preserving the core schema.

The concept that makes skills work is progressive disclosure: showing just enough information to help the agent decide what to do, then revealing more as needed. This plays out across three loading levels:

This three-level structure is what keeps agents fast while still giving them access to deep context on demand.

Discovery is filesystem-based. The agent scans known directories:

No registry, no build step, no compilation. Installing a skill is copying a folder to the right place.

Here's where it gets interesting: there is no algorithmic routing. The system formats skill names and descriptions into a text block, injects it into the prompt, and lets the LLM decide which skill applies.

No embeddings. No classifiers. No pattern matching at the code level. The decision happens entirely within the model's forward pass. Claude reads "Extract PDF text, fill forms, merge files. Use when handling PDFs" and matches it against "I need to extract tables from this PDF."

The implication is significant: the description field is the most important thing you write in a skill. If your skill doesn't trigger, it's almost never the instructions. It's the description. A description that says "Helps with PDFs" won't match as reliably as one that lists specific actions and trigger contexts.

Once the LLM selects a skill, the agent:

From this point, the agent follows the skill's instructions using its available tools. If the skill references a script in scripts/validate.sh, the agent reads and executes it. If it points to references/API.md, the agent loads that file into context. The skill's instructions orchestrate; the agent's tools execute.

Skills can also inject dynamic context through shell preprocessing. The syntax !gh pr diff`` runs the command before sending content to the agent, so Claude receives actual PR data rather than a placeholder. This turns skills into live-context templates.

When Agent Skills were released as an open standard in December 2025, a vocal segment of the community declared that MCP was dead. Skills replace MCP servers. This reflects a genuine confusion that's worth unpacking because it affects real architectural decisions.

The confusion has legitimate roots. For certain workflows that previously required an MCP server, a skill with CLI access achieves the same result. Before, you might have written an MCP server connecting to a CRM API to format reports. Now, a skill with formatting rules plus allowed-tools: Bash(gh:*) can do the job through the CLI.

Simon Willison observed that "almost everything I might achieve with an MCP can be handled by a CLI tool instead." Skills make this pattern explicit: the skill says what to do, and CLI tools (accessible through the agent's Bash tool) do it. For anything with a good CLI, the MCP server becomes optional.

But the overlap has limits. MCP and skills operate at different layers:

The simple rule: if you need the agent to know how to do something, write a skill. If you need the agent to access something external, use MCP. Most real-world workflows benefit from both: MCP for connectivity, skills for methodology.

The teams that burned weeks building the wrong type of integration (and it happened) could have avoided it with this distinction. A skill can't query your production database. An MCP server shouldn't encode your team's code review conventions into its tool descriptions. Different problems, different tools.

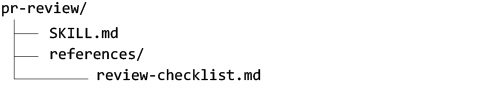

Here's a concrete, complete skill that teaches an agent to review pull requests following a specific methodology. It's deliberately small to make the structure clear.

SKILL.md:

references/review-checklist.md:

That's 30 lines of SKILL.md plus a checklist. To install it: copy the pr-review/ folder to ~/.claude/skills/. To use it: type /pr-review or just ask "review this PR." The description will match.

The progressive disclosure is visible: at startup, only the name and description are in context. When activated, the full SKILL.md loads. The review-checklist.md loads only if Claude reaches step 3 and follows the reference link.

Skills.sh, operated by Vercel, lists 89,753 skills. Other marketplaces (claudeskills.info, LobeHub, curated lists on GitHub) add thousands more. Installation is a one-liner: npx skillsadd <owner/repo>. This accessibility is great for discoverability. It's terrible for quality assurance.

When Tim from TimOnWeb evaluated five security-focused skills for Claude Code, he found that only one was worth installing. The most popular skill (1,600+ installs) was bundled inside an aggregator of 900+ skills; its install count reflected distribution, not quality. Most of the evaluated skills were static checklists that flagged settings.API_URL as a potential SSRF vulnerability because they couldn't distinguish server configuration from user input. One skill was a verbatim copy of another, redistributed without additions.

The one that worked, Sentry's security-review skill, succeeded because it encoded methodology, not just a checklist. It had a confidence classification system (HIGH/MEDIUM/LOW), framework-specific awareness (Django, Node, Go, Rust), data-flow analysis that traced input sources, and 17 reference documents covering different vulnerability classes. It changed how Claude reasoned about security rather than giving it a list to pattern-match against.

This is the difference between a skill and a prompt dump. And it's invisible if you sort by install count.

A growing number of developers ask an LLM to write their skills: "Write me a skill that does X." The LLM produces a syntactically correct SKILL.md with generic instructions. It works, sort of. But it typically generates vague descriptions (poor routing), generic instructions (no domain depth), no progressive disclosure structure (everything in one file), and no supporting references or scripts.

The result is a skill that technically loads but doesn't perform meaningfully better than just typing the instruction directly. The value of a well-crafted skill comes from domain knowledge that the LLM doesn't have: your team's specific conventions, your project's edge cases, the framework-specific patterns that matter in your codebase. A skill is only as good as the expertise encoded in it.

This doesn't mean you shouldn't use LLMs to help draft skills. But treating the generated output as the final product, without adding your domain-specific knowledge, reviewing the description for routing quality, or structuring progressive disclosure, produces skills that are noise, not signal.

A skill is a markdown file. It takes two minutes to read. Before installing any skill:

The install count tells you how many people copied a folder. It doesn't tell you if the folder contains anything useful.

Skills are not magic. They're structured context with a discovery pipeline. A SKILL.md doesn't execute anything; it tells an agent how to approach a problem, and the agent uses its own tools to do the work.

The format's simplicity is its defining feature. A skill is portable (30+ tools support it), transparent (you can read the entire thing in minutes), and versionable (it's a file in a directory, so git works). The progressive disclosure model keeps the context budget manageable even with large skill libraries. And the LLM-based routing, while dependent on good descriptions, eliminates the need for complex matching infrastructure.

The practical advice is straightforward: write descriptions like you're writing search keywords for your future self. Keep SKILL.md under 500 lines and push detail into reference files. Use allowed-tools to scope permissions minimally. Use disable-model-invocation for anything with side effects. And read skills before you install them, because a markdown file only takes two minutes, and install count is a distribution metric, not a quality metric.